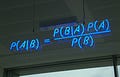

"Probability is orderly opinion... inference from data is nothing other than the revision of such opinion in the light of relevant new information." - Thomas Bayes

Hypothesis testing is used to assess whether the data support a claim or assertion about a population. The process starts from a default assumption about the population, called the null hypothesis, and uses a statistical test to determine how likely the observed data would be if the null hypothesis were true. The objective of hypothesis testing is to make an informed decision about the validity of the claim or assertion based on the data and the results of the test.

Hypothesis testing is an essential element of scientific research, allowing researchers to make an informed decision about a particular claim or assertion. It helps researchers determine how likely it is that their claim or assertion is true, based on the data they have collected. To test a hypothesis, a clear understanding of the studied population, the variables of concern, and the type of data is required. Moreover, appropriate statistical tools and methods are necessary to analyze the data.

Once the data are collected and the appropriate statistical methods are chosen, the hypothesis test can be carried out. The process involves formulating the null hypothesis, an assumption about the population, and an alternative hypothesis, which contradicts the null hypothesis. The statistical methods then measure how compatible the data are with the null hypothesis. If the data deviate from what the null hypothesis predicts by a statistically significant amount, the null hypothesis is rejected in favor of the alternative hypothesis.

The results of the hypothesis testing process can be used to make an informed decision about a particular claim or assertion. By understanding the results of the test and interpreting them correctly, researchers, interpreters, and practitioners can make informed decisions about the validity of their claim or assertion.

A p-value is a statistical measure used to assess the strength of evidence against a null hypothesis and to determine whether the results of a study are statistically significant. It is the probability of obtaining a result equal to or more extreme than the one observed, under the assumption that the null hypothesis is true. A low p-value indicates strong evidence against the null hypothesis and thereby lends support to the alternative hypothesis. In other words, the lower the p-value, the less compatible the data are with the null hypothesis.

P-values are calculated from the sample data and compared to a pre-defined significance level, also known as alpha. Alpha is the probability of rejecting the null hypothesis when it is actually true. If the p-value is less than alpha, the null hypothesis is rejected and the alternative hypothesis is accepted. If the p-value is greater than alpha, the null hypothesis is not rejected and the alternative hypothesis is not accepted.
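As a minimal sketch of that comparison, consider a coin-flip experiment. The scenario is an assumption made for illustration: the null hypothesis is that the coin is fair, the data are 60 heads in 100 flips, and alpha is 0.05. The helper name `binomial_p_value` is hypothetical, and only the Python standard library is used.

```python
from math import comb


def binomial_p_value(successes: int, trials: int, p_null: float = 0.5) -> float:
    """One-sided p-value: P(X >= successes) when X ~ Binomial(trials, p_null)."""
    return sum(
        comb(trials, k) * p_null**k * (1 - p_null) ** (trials - k)
        for k in range(successes, trials + 1)
    )


ALPHA = 0.05  # significance level, fixed before looking at the data (assumed value)

# Illustrative data: 60 heads in 100 flips of a coin assumed fair under the null.
p_value = binomial_p_value(successes=60, trials=100)
reject_null = p_value < ALPHA

print(f"p-value = {p_value:.4f}, reject null: {reject_null}")
```

With these illustrative numbers the p-value comes out at roughly 0.028, which is below alpha, so the null hypothesis of a fair coin would be rejected. A two-sided test on the same data would roughly double the p-value and land just above 0.05, which shows how much the conclusion can depend on choices made before the test is run.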

Statistical power is the probability that a statistical test will detect an effect of a given magnitude when that effect truly exists. Put simply, a well-powered experiment is likely to detect true differences between two or more populations.

Statistical power yields insight into how reliable the results of a test are. If the power of a test is low, it means that it is unlikely to detect any effects that truly exist. Conversely, if the power of a test is high, it means that it is likely to detect any effects that truly exist. Statistical power is also important because it helps researchers determine the sample size needed for an experiment. The larger the sample size, the higher the probability of detecting a real effect.
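The link between sample size and power can be sketched for the simplest textbook case. The setup is an assumption chosen for illustration, not something prescribed above: a one-sided z-test of a zero mean with known standard deviation. The function names `z_test_power` and `smallest_n_for_power` are hypothetical.

```python
from statistics import NormalDist


def z_test_power(effect: float, n: int, sigma: float = 1.0, alpha: float = 0.05) -> float:
    """Power of a one-sided z-test of H0: mean = 0 when the true mean is `effect`."""
    z_crit = NormalDist().inv_cdf(1 - alpha)  # rejection threshold under the null
    shift = effect * n**0.5 / sigma           # how far the true effect moves the z statistic
    return 1 - NormalDist().cdf(z_crit - shift)


def smallest_n_for_power(effect: float, target: float = 0.8, sigma: float = 1.0,
                         alpha: float = 0.05, max_n: int = 100_000) -> int:
    """Smallest sample size whose power reaches `target`, found by linear search."""
    for n in range(1, max_n + 1):
        if z_test_power(effect, n, sigma, alpha) >= target:
            return n
    raise ValueError("target power not reached within max_n observations")


# Illustrative effect size of half a standard deviation.
for n in (10, 30, 100):
    print(n, round(z_test_power(effect=0.5, n=n), 3))
print("n needed for 80% power:", smallest_n_for_power(effect=0.5))
```

Under these assumptions, an effect of half a standard deviation needs about 25 observations to reach 80% power. Real studies usually involve unknown variances and two groups, so the numbers are indicative only.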

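Statistical power also helps to understand the trade-off between type I and type II errors. A type I error occurs when an experiment reports an effect that does not exist (a false positive), and a type II error occurs when an experiment fails to detect an effect that does exist (a false negative). Power is the complement of the type II error rate: the greater the statistical power of a test, the lower the probability of making a type II error. The probability of a type I error is set by the significance level alpha, and at a fixed sample size, lowering alpha to guard against type I errors reduces power.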
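The two error rates can be estimated by simulating many experiments. This is a rough Monte Carlo sketch under assumed conditions: normally distributed data with known standard deviation, a one-sided z-test, and an illustrative effect of half a standard deviation. The function names are hypothetical.

```python
import random
from statistics import NormalDist, fmean


def rejects_null(sample: list[float], sigma: float, alpha: float) -> bool:
    """One-sided z-test of H0: mean = 0 against H1: mean > 0, with known sigma."""
    z = fmean(sample) * len(sample) ** 0.5 / sigma
    return z > NormalDist().inv_cdf(1 - alpha)


def error_rates(effect: float, n: int, sigma: float = 1.0, alpha: float = 0.05,
                runs: int = 5000, seed: int = 0) -> tuple[float, float]:
    """Estimate (type I rate, type II rate) from `runs` simulated experiments each."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible

    def draw(mean: float) -> list[float]:
        return [rng.gauss(mean, sigma) for _ in range(n)]

    # Type I: the null is true (mean 0), yet the test rejects it.
    type_1 = sum(rejects_null(draw(0.0), sigma, alpha) for _ in range(runs)) / runs
    # Type II: the effect is real (mean = effect), yet the test fails to reject.
    type_2 = sum(not rejects_null(draw(effect), sigma, alpha) for _ in range(runs)) / runs
    return type_1, type_2


for n in (10, 30):
    t1, t2 = error_rates(effect=0.5, n=n)
    print(f"n={n}: type I ~ {t1:.3f}, type II ~ {t2:.3f}, power ~ {1 - t2:.3f}")
```

In a simulation like this, the type I rate should stay close to alpha regardless of sample size, while the type II rate shrinks as the sample grows, which is the same thing as power increasing.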

It’s important that practitioners of applied sciences, like physicians, understand the technicalities and statistics behind clinical trials. But what’s even more important is to have an anchoring framework for not only interpreting and assimilating results and data but also for adjusting our notions and primers around the practices of importance.

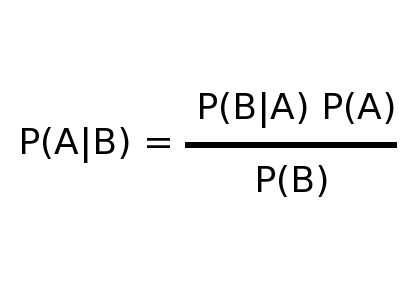

A realization to have after understanding how hypothesis testing works is that it’s all a matter of probabilities. No study can show or demonstrate anything with full certainty. As explained earlier, a statistically significant result implies that the data we observed would be sufficiently improbable under our assumption for us to stop believing in what was assumed, and start believing in the opposite of our assumption. In other words, we reject the null hypothesis and accept the alternative hypothesis.

This doesn’t mean that the null hypothesis CAN’T be true. It means that the data would be sufficiently improbable if the null hypothesis were true for us not to believe in it. Here, again, it’s all about probabilities. The p-value gives us insight into the magnitude of those probabilities and is therefore of high importance.

Our notions and primers around subjects, practices, interventions, or approaches are created from subjective experience, studies, an understanding of the first principles, discourse, instinct, and more. Our notions and primers always stand in conflict with competing ideas, and practitioners have to decide which approach to run with.

What studies can’t do is demonstrate with absolute certainty that an approach is false or wrong. Studies can increase our confidence in the “trueness” of our beliefs and our strategy, decrease it, or leave it unchanged. A study supporting our approach or strategy should be put under scrutiny to establish the true extent to which it can increase our confidence in our practices, and should only be allowed to increase our confidence by that amount or less. In light of new evidence, it’s outright intellectual fraud to update one’s notions out of line with what the totality of the new data suggests is “true”.

When we increase our confidence in an idea, it’s important to realize that our combined confidence in the competing ideas being “true” should (and will by necessity) decrease by the same amount.
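One way to make this bookkeeping concrete is Bayes' rule applied to two ideas. The sketch assumes the two ideas are mutually exclusive and together cover all possibilities, and the prior and likelihood values are made up for illustration. The function name `update_confidence` is hypothetical.

```python
def update_confidence(prior_a: float, likelihood_a: float, likelihood_b: float) -> float:
    """Posterior confidence in idea A after one piece of evidence (Bayes' rule).

    likelihood_a and likelihood_b are the probabilities of seeing the evidence
    if idea A or idea B, respectively, were true.
    """
    prior_b = 1.0 - prior_a  # A and B are assumed to be the only two options
    evidence = prior_a * likelihood_a + prior_b * likelihood_b
    return prior_a * likelihood_a / evidence


# Illustrative numbers: we start undecided, and a study's result is twice as
# likely under idea A as under idea B.
prior_a = 0.5
posterior_a = update_confidence(prior_a, likelihood_a=0.8, likelihood_b=0.4)

gain_for_a = posterior_a - prior_a
loss_for_b = (1.0 - prior_a) - (1.0 - posterior_a)
print(f"confidence in A: {prior_a:.2f} -> {posterior_a:.2f}")
print(f"gain for A = {gain_for_a:.3f}, loss for B = {loss_for_b:.3f}")
```

Because the two confidences always sum to one in this setup, whatever idea A gains is exactly what idea B loses. Evidence that is equally likely under both ideas leaves the confidence unchanged, however impressive the study looks.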

We act in the world as if we have full, 100%, confidence in our strategy. But what’s important to realize is that other people, just as successful as anyone, have totally different strategies and still succeed. Is it just a coincidence? At some point, reality has to catch up with the probabilities and something horrible will happen, and the one with the “right” strategy will proudly point out the validity of their practices.

In situations where it’s impossible to act as if one has full confidence in their strategy, the best thing we can do is to follow the totality of the evidence, all things considered, and draw conclusions that allow us to be pragmatic and trust the process. It’s inevitable that we’ll experience events that deviate from the expected outcome of our strategy, but in the end, stacking the bets on what’s most probably “true” based on the available information is the strategy that will prevail.